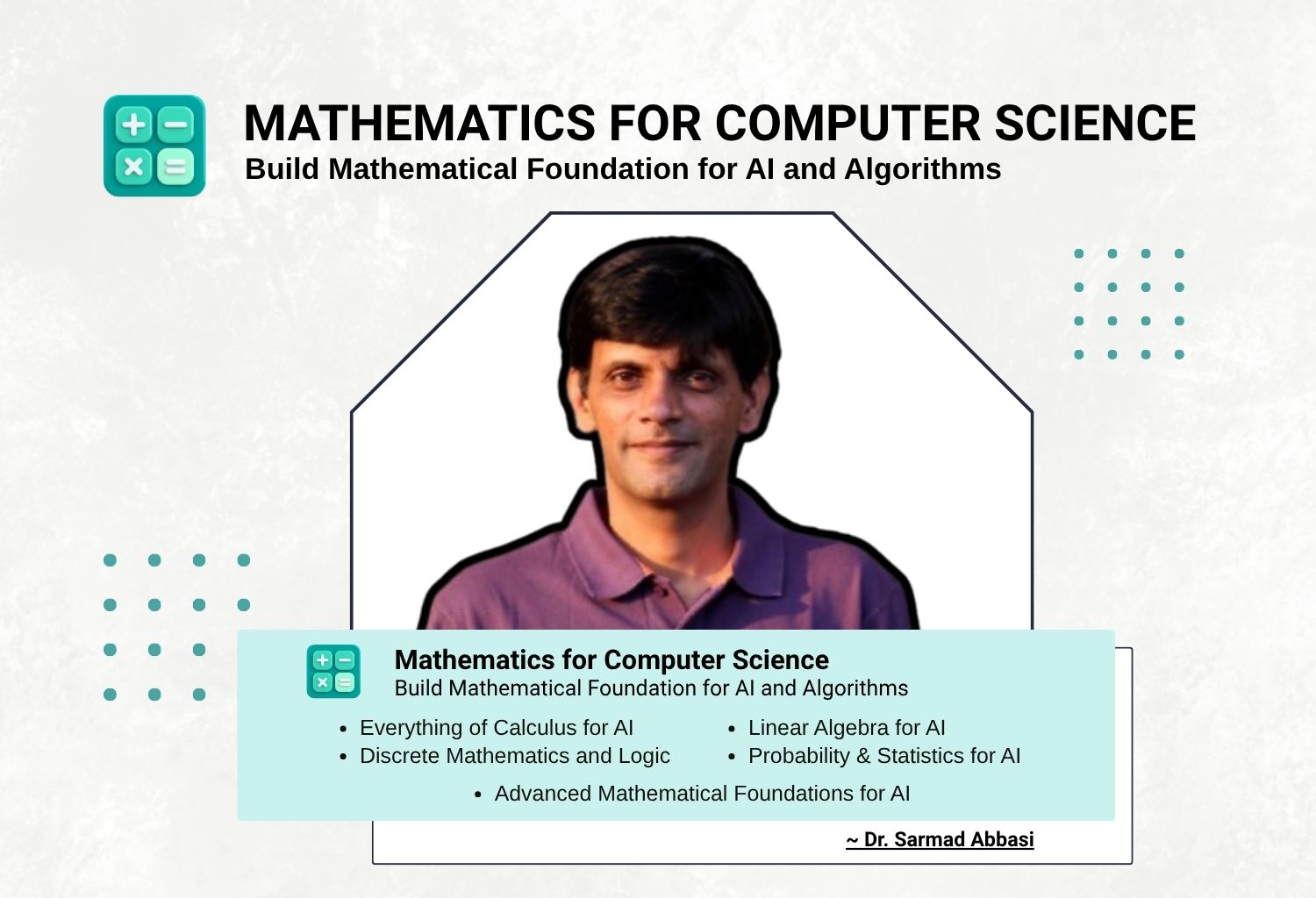

Module 1: Discrete Mathematics for AI

Lecture 1 — Foundations of Logic, Proofs & Sets

- Core Concepts: Propositions, truth tables (AND, OR, XOR, NOT), logical implication, equivalence, and quantifiers (∀, ∃). Direct proofs vs. contradiction. Proof of the prime factor bound — why checking divisors only up to √N is sufficient.

- Intuition: Building the “grammar” of mathematics to define truth and falsehood clearly.

- AI/CS Link: Logical rules form the basis of rule-based systems; quantifiers define model assumptions.
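
The prime factor bound from this lecture can be sketched in code. If N = a·b with a ≤ b, then a·a ≤ N, so any composite N has a divisor no larger than √N. The function name `is_prime` is an illustrative choice, not part of the course material.

```python
import math

def is_prime(n: int) -> bool:
    """Trial division, checking candidate divisors only up to floor(sqrt(n))."""
    if n < 2:
        return False
    # math.isqrt returns the exact integer square root, avoiding float rounding.
    for d in range(2, math.isqrt(n) + 1):
        if n % d == 0:
            return False  # found a divisor d <= sqrt(n), so n is composite
    return True

primes = [n for n in range(30) if is_prime(n)]
print(primes)
```

For N near one million this tests at most about 1,000 candidate divisors instead of nearly 1,000,000.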

Lecture 2 — Mathematical Induction & Recurrence Relations

- Core Concepts: The principle of mathematical induction, induction on sequences, and relating recursion to induction. Key proofs:

  - Arithmetic Series: 1 + 2 + … + n = n(n+1)/2

  - Geometric Series: a + ar + … + arⁿ⁻¹ = a(rⁿ−1)/(r−1) for r ≠ 1, and the practical impact of both series

- Intuition: Using “domino effect” logic to prove that a property holds at every step of an unbounded process: the first domino falls, and each falling domino knocks over the next.

- AI/CS Link: Induction is used to prove convergence of iterative algorithms and correctness of recursive functions.
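
As a worked instance of the domino argument, here is a sketch of the induction proof for the sum of the first n positive integers. The label P(n) for the claim is notation introduced here for illustration.

```latex
\begin{aligned}
\text{Claim } P(n):\quad & \sum_{k=1}^{n} k = \frac{n(n+1)}{2} \\
\text{Base case } (n = 1):\quad & 1 = \frac{1 \cdot 2}{2} \\
\text{Inductive step (assume } P(n)\text{):}\quad & \sum_{k=1}^{n+1} k
  = \frac{n(n+1)}{2} + (n+1)
  = \frac{(n+1)(n+2)}{2}
\end{aligned}
```

The last expression is exactly P(n+1), so the base case together with the inductive step gives P(n) for every n ≥ 1.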

Lecture 3 — Growth Rates & Computational Complexity

- Core Concepts: Big O, Big Theta, and Big Omega notation. Comparing function classes: Constant, Logarithmic, Linear, n log n, Quadratic, Exponential.

- Intuition: Understanding how time/space required by an algorithm scales with input size.

- AI/CS Link: Vital for evaluating scalability of training algorithms and efficiency of model inference.
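
One way to see growth rates concretely is to count loop iterations rather than measure time. The sketch below compares a linear scan, which is O(n), with binary search on sorted data, which is O(log n). The function names and input sizes are illustrative assumptions.

```python
def linear_search_steps(items, target):
    """Number of elements inspected by a left-to-right scan."""
    steps = 0
    for x in items:
        steps += 1
        if x == target:
            break
    return steps

def binary_search_steps(items, target):
    """Number of probes made by binary search on a sorted list."""
    lo, hi, steps = 0, len(items) - 1, 0
    while lo <= hi:
        steps += 1
        mid = (lo + hi) // 2
        if items[mid] == target:
            break
        if items[mid] < target:
            lo = mid + 1
        else:
            hi = mid - 1
    return steps

for n in (1_000, 1_000_000):
    data = list(range(n))
    # Searching for the last element is the worst case for the linear scan.
    print(n, linear_search_steps(data, n - 1), binary_search_steps(data, n - 1))
```

Growing the input by a factor of 1,000 multiplies the linear count by 1,000 but adds only about 10 probes to the binary search.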

Lecture 4 — Advanced Set Theory & Relations

- Core Concepts: Set operations, Cartesian products, and power sets.

- Key relation properties: Closure, Transitivity, Symmetry, Reflexivity.

- Intuition: Organizing data into collections and defining how elements within them interact.

- AI/CS Link: Set operations used for dataset splits (train/test/val) and defining feature sets.
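
The dataset-split idea can be sketched with Python's built-in `set` type. A valid split is a partition: the parts are pairwise disjoint and their union is the whole dataset. The sample IDs and feature names below are made up for illustration.

```python
from itertools import chain, combinations

samples = set(range(10))       # hypothetical sample IDs
train = {0, 1, 2, 3, 4, 5}
val = {6, 7}
test = samples - train - val   # set difference: whatever is left over

# Partition check: no sample appears in two splits, and none is lost.
is_partition = (
    train.isdisjoint(val)
    and train.isdisjoint(test)
    and val.isdisjoint(test)
    and (train | val | test) == samples
)

def power_set(s):
    """All subsets of s; a set with n elements has 2**n subsets."""
    items = sorted(s)
    subsets = chain.from_iterable(
        combinations(items, r) for r in range(len(items) + 1)
    )
    return [set(c) for c in subsets]

feature_subsets = power_set({"age", "income", "city"})
print(is_partition, len(feature_subsets))
```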

Lecture 5 — Counting, Combinatorics & Binomials

- Core Concepts:

  - Sum, Product, Subtraction, and Division rules

  - Permutations and combinations

  - Binomial Theorem

  - Inclusion-Exclusion principle and its generalization

- Intuition: Calculating the number of possible outcomes or configurations in a finite system.

- AI/CS Link: Model capacity, parameter search space, and number of possible token sequences in NLP.
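
A short sketch of the counting rules above, using only the standard library. The vocabulary size and sequence length are hypothetical values chosen to show how fast the product rule grows.

```python
import math

ordered_pairs = math.perm(5, 2)    # ordered selections of 2 items from 5
unordered_pairs = math.comb(5, 2)  # unordered selections of 2 items from 5

# Product rule: each of seq_len positions can hold any of vocab_size tokens.
vocab_size, seq_len = 50_000, 3    # hypothetical values
num_sequences = vocab_size ** seq_len

# Inclusion-exclusion: integers in 1..n divisible by 2, 3, or 5.
n = 100
by_formula = (
    n // 2 + n // 3 + n // 5       # single sets
    - n // 6 - n // 10 - n // 15   # pairwise intersections
    + n // 30                      # triple intersection
)
by_brute_force = sum(
    1 for k in range(1, n + 1) if k % 2 == 0 or k % 3 == 0 or k % 5 == 0
)
print(ordered_pairs, unordered_pairs, num_sequences, by_formula, by_brute_force)
```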

Lecture 6 — Graph Theory Foundations

- Core Concepts: Nodes, edges, directed vs. undirected graphs, adjacency matrices, paths, and connectivity.

- Intuition: Representing complex relationships between entities as a network.

- AI/CS Link: Neural networks as computational graphs; knowledge graphs for semantic relationships.

Lecture 7 — Trees, DAGs & Topological Sorting

- Core Concepts:

  - Tree structures and Directed Acyclic Graphs (DAGs)

  - Topological sorting

  - Traversal algorithms: DFS and BFS in grids and general graphs

- Intuition: Modeling hierarchical data and processes with a specific execution order.

- AI/CS Link: Backpropagation is a reverse traversal on a DAG; transformers utilize attention graphs.
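
Topological sorting can be sketched with Kahn's algorithm, one of several standard approaches (a DFS-based version is equally valid). The small graph below is a hypothetical computational graph; reversing its topological order gives an order in which gradients could be propagated backward.

```python
from collections import deque

def topological_sort(graph):
    """Kahn's algorithm. graph maps each node to the nodes it points to."""
    indegree = {node: 0 for node in graph}
    for targets in graph.values():
        for t in targets:
            indegree[t] = indegree.get(t, 0) + 1

    # Start with nodes that have no incoming edges.
    queue = deque(node for node, deg in indegree.items() if deg == 0)
    order = []
    while queue:
        node = queue.popleft()
        order.append(node)
        for t in graph.get(node, ()):
            indegree[t] -= 1
            if indegree[t] == 0:
                queue.append(t)

    if len(order) != len(indegree):
        raise ValueError("graph has a cycle, so no topological order exists")
    return order

# Hypothetical graph: inputs x and w feed z, z feeds a, a feeds loss.
graph = {"x": ["z"], "w": ["z"], "z": ["a"], "a": ["loss"], "loss": []}
forward_order = topological_sort(graph)
backward_order = forward_order[::-1]
print(forward_order, backward_order)
```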

Lecture 8 — Formal Languages & Automata for AI

- Core Concepts: Finite State Machines (FSM), regular expressions, and the relationship between logic and formal languages.

- Intuition: Defining the rules and structures that generate valid sequences of data.

- AI/CS Link: Essential for understanding tokenization in LLMs and the logic of string processing.
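
The link between finite state machines and regular expressions can be sketched with a two-state machine that accepts binary strings containing an even number of 1s. The state names and the equivalent regular expression are illustrative choices; the final check compares the two on every binary string of length up to 6.

```python
import re
from itertools import product

# Transition table of a deterministic FSM over the alphabet {"0", "1"}.
TRANSITIONS = {
    ("even", "0"): "even", ("even", "1"): "odd",
    ("odd", "0"): "odd",   ("odd", "1"): "even",
}

def accepts(s, start="even", accepting=("even",)):
    """Run the machine on s and report whether it ends in an accepting state."""
    state = start
    for ch in s:
        if (state, ch) not in TRANSITIONS:
            return False  # symbol outside the alphabet
        state = TRANSITIONS[(state, ch)]
    return state in accepting

# A regular expression describing the same language: 1s always come in pairs.
EVEN_ONES = re.compile(r"(0*10*1)*0*")

agree = all(
    accepts("".join(bits)) == bool(EVEN_ONES.fullmatch("".join(bits)))
    for n in range(7)
    for bits in product("01", repeat=n)
)
print(agree)
```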

Module 2: Calculus for AI

Lecture 1 — Limits, Continuity & Derivative Foundations

- Core Concepts: Definition of a limit, indeterminate forms (0/0, ∞/∞), and the Sandwich Theorem. The 6 basic functions and their derivatives: xⁿ, sin, cos, eˣ (and aˣ), ln x, step/|x| functions.

- Intuition: Measuring how a function changes over an infinitesimally small interval around a point.

- AI/CS Link: Limits are necessary to define derivatives used in gradient descent.

Lecture 2 — Rules of Differentiation & The Chain Rule

- Core Concepts: The 6 rules of differentiation: Sum, Product, Quotient, Inverse, Chain Rule, L’Hôpital’s Rule.

- Intuition: Calculating the rate of change for complex, nested functions.

- AI/CS Link: The Chain Rule is the mathematical engine behind backpropagation in neural networks.
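
A quick way to sanity-check a chain rule derivation is to compare it with a finite-difference estimate. The sketch below uses f(x) = sin(x²), whose derivative by the chain rule is cos(x²)·2x. The test point and step size are arbitrary choices.

```python
import math

def f(x):
    return math.sin(x ** 2)        # outer function sin(u), inner function u = x^2

def df_chain_rule(x):
    return math.cos(x ** 2) * 2 * x  # (d sin(u)/du) * (du/dx)

def numerical_derivative(func, x, h=1e-6):
    """Central difference approximation of func'(x)."""
    return (func(x + h) - func(x - h)) / (2 * h)

x = 1.3
analytic = df_chain_rule(x)
numeric = numerical_derivative(f, x)
print(analytic, numeric)
```

The same manual-versus-numerical comparison is a common way to debug hand-written backpropagation code.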

Lecture 3 — Optimization: Critical Points & Curvature

- Core Concepts:

  - Finding maxima, minima, and inflection points

  - Second derivative test; Newton’s method

  - All Values Theorem and Mean Value Theorem

- Intuition: Identifying the “peaks” and “valleys” of a function to find its best value.

- AI/CS Link: Training a model involves optimizing a loss function to find its global or local minimum.
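
Newton's method and the second derivative test can be combined in a few lines: apply Newton's iteration to f′ to locate a critical point, then inspect the sign of f″ there. The function f(x) = x⁴ − 3x² + 2, the starting point, and the tolerance are assumptions made for this sketch.

```python
import math

def f1(x):
    return 4 * x ** 3 - 6 * x   # first derivative of f(x) = x^4 - 3x^2 + 2

def f2(x):
    return 12 * x ** 2 - 6      # second derivative of f

def newton_critical_point(x, tol=1e-12, max_iter=50):
    """Solve f'(x) = 0 with the update x <- x - f'(x) / f''(x)."""
    for _ in range(max_iter):
        step = f1(x) / f2(x)
        x -= step
        if abs(step) < tol:
            break
    return x

x_star = newton_critical_point(2.0)
kind = "minimum" if f2(x_star) > 0 else "maximum or inconclusive"
print(x_star, kind)
```

Starting from x = 2 the iteration settles on x = √1.5, where f″ is positive, so the point is a local minimum. A different starting point can lead to a different critical point.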

Lecture 4 — Taylor Series & Linearization

- Core Concepts: Taylor Series expansions, first-order Taylor (linearization), and the Binomial Theorem.

- Intuition: Approximating complex non-linear functions with simpler polynomials.

- AI/CS Link: Linearization lets us understand the local geometry of a high-dimensional loss surface.
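
The sketch below approximates eˣ around 0 with Taylor polynomials of increasing order. Order 1 is the linearization 1 + x, which is accurate only near the expansion point. The helper name `taylor_exp` is illustrative.

```python
import math

def taylor_exp(x, order):
    """Taylor polynomial of e^x around 0, truncated after the x^order term."""
    return sum(x ** k / math.factorial(k) for k in range(order + 1))

for x in (0.1, 1.0):
    for order in (1, 2, 10):
        error = abs(taylor_exp(x, order) - math.exp(x))
        print(f"x={x} order={order} error={error:.2e}")
```

The error of the linearization grows quickly as x moves away from 0, while higher-order polynomials stay accurate over a wider range.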

Lecture 5 — Integration & The Fundamental Theorem of Calculus

- Core Concepts: Riemann sums, Fundamental Theorem of Calculus I & II, and integral intuition.

- Intuition: Calculating the total accumulated value over an interval (area under a curve).

- AI/CS Link: Integrals define expectations in probability and are core to diffusion models.
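
A Riemann sum and the Fundamental Theorem of Calculus can be compared directly. The sketch below uses the midpoint variant of the Riemann sum on f(x) = x² over [0, 1], an example chosen here because its antiderivative x³/3 is easy to write down.

```python
def riemann_sum(f, a, b, n):
    """Midpoint Riemann sum of f over [a, b] with n equal subintervals."""
    width = (b - a) / n
    return sum(f(a + (i + 0.5) * width) for i in range(n)) * width

def f(x):
    return x * x

def F(x):
    return x ** 3 / 3   # an antiderivative of f

approx = riemann_sum(f, 0.0, 1.0, 1000)
exact = F(1.0) - F(0.0)   # Fundamental Theorem of Calculus
print(approx, exact)
```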

Lecture 6 — Multivariable Calculus: Partial Derivatives & Gradients

- Core Concepts: Partial derivatives, the Gradient vector, and the Multivariable Chain Rule.

- Intuition: Understanding how a function of many variables changes as each variable is tweaked individually.

- AI/CS Link: Gradients tell us how to update every parameter in a model simultaneously.
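
A minimal sketch of a simultaneous parameter update, assuming the toy objective f(x, y) = (x − 1)² + 2(y + 2)², whose gradient is (2(x − 1), 4(y + 2)) and whose minimum is at (1, −2). The starting point and learning rate are arbitrary.

```python
def grad(x, y):
    """Analytic gradient of f(x, y) = (x - 1)^2 + 2 * (y + 2)^2."""
    return 2 * (x - 1), 4 * (y + 2)

x, y = 5.0, 5.0        # arbitrary starting point
learning_rate = 0.1    # hypothetical step size
for _ in range(200):
    gx, gy = grad(x, y)
    # Both parameters move together, using the same gradient evaluation.
    x, y = x - learning_rate * gx, y - learning_rate * gy

print(x, y)
```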

Lecture 7 — The Jacobian & Vector-Valued Functions

- Core Concepts: Defining the Jacobian matrix and its role in multivariable transformations.

- Intuition: Extending the derivative to functions that map multiple inputs to multiple outputs.

- AI/CS Link: Crucial for understanding gradient flow through layers and activations in Deep Learning.

Lecture 8 — Convexity & High-Dimensional Optimization

- Core Concepts: Definition of convexity, the Hessian matrix, and its intuition in loss surface geometry.

- Intuition: Determining if a function is “bowl-shaped,” guaranteeing any local minimum is global.

- AI/CS Link: Convex optimization ensures stable and reliable training for many ML algorithms.

Module 3: Probability Foundations for AI

Lecture 1 — Discrete Probability & Kolmogorov Axioms

- Core Concepts: Basic probability, expectation, variance, and standard deviation. Kolmogorov axioms, sample spaces, and connecting probability to counting with several examples.

- Intuition: Quantifying uncertainty in a mathematically rigorous way.

- AI/CS Link: Provides the foundation for all probabilistic modeling and data science.

Lecture 2 — Conditional Probability & Bayes’ Theorem

- Core Concepts: Conditional probability, Law of Total Probability, Bayes’ Theorem. Application: designing spam filters.

- Intuition: Updating our beliefs about an event based on new incoming evidence.

- AI/CS Link: Used in Bayesian inference and posterior updates.
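
The spam filter application reduces to one use of the Law of Total Probability followed by Bayes' Theorem. All probabilities below are made-up numbers for illustration, not measured rates.

```python
# Hypothetical inputs for a single-word spam filter.
p_spam = 0.2               # prior: fraction of mail that is spam
p_word_given_spam = 0.6    # P(word appears | spam)
p_word_given_ham = 0.05    # P(word appears | not spam)

# Law of Total Probability: overall chance that the word appears.
p_word = p_word_given_spam * p_spam + p_word_given_ham * (1 - p_spam)

# Bayes' Theorem: updated belief after seeing the word.
p_spam_given_word = p_word_given_spam * p_spam / p_word
print(p_word, p_spam_given_word)
```

Seeing the word raises the belief that the message is spam from the prior of 0.2 to a posterior of 0.75.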

Lecture 3 — Random Variables: Expectation & Variance

- Core Concepts: Discrete and continuous random variables, PDF vs. PMF, expectation, variance, and standard deviation.

- Intuition: Summarizing a complex distribution with a single average value and a measure of spread.

- AI/CS Link: Expectation is used to calculate the average loss of a model over a dataset.

Lecture 4 — Continuous Distributions & The Gaussian

- Core Concepts: Uniform, Exponential, and Gaussian (Normal) distributions; the Multivariate Gaussian.

- Intuition: Modeling real-world data that clusters around a central mean.

- AI/CS Link: Gaussian distribution is a standard prior in VAEs and the basis for noise in diffusion models.

Lecture 5 — Covariance & High-Dimensional Geometry

- Core Concepts: Covariance and correlation matrices and their geometric interpretation.

- Intuition: Measuring how two or more variables change together.

- AI/CS Link: Covariance matrix is central to latent space modeling and feature redundancy analysis.

Lecture 6 — Limit Theorems & Sampling

- Core Concepts: Law of Large Numbers (LLN), Central Limit Theorem (CLT), and Monte Carlo sampling basics.

- Intuition: Understanding why many small, independent random factors average out into a bell curve.

- AI/CS Link: Provides intuition behind Batch Normalization and stochastic optimization (SGD).
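
The Law of Large Numbers and Monte Carlo sampling can be sketched together: the sample average of X² for X drawn uniformly from [0, 1) approaches the true expectation 1/3 as the sample count grows. The seed and sample sizes are arbitrary choices.

```python
import random

rng = random.Random(0)   # fixed seed so repeated runs give the same numbers

def mc_estimate(n):
    """Estimate E[X^2] for X ~ Uniform(0, 1) by averaging n samples."""
    return sum(rng.random() ** 2 for _ in range(n)) / n

for n in (100, 10_000, 100_000):
    estimate = mc_estimate(n)
    print(n, estimate, abs(estimate - 1 / 3))
```

The typical error shrinks roughly like 1/√n, which is why Monte Carlo estimates improve slowly but reliably with more samples.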

Lecture 7 — Likelihood & Parameter Estimation

- Core Concepts: Likelihood and log-likelihood functions; derivation of Maximum Likelihood Estimation (MLE).

- Intuition: Finding the model parameters that make the observed data most likely.

- AI/CS Link: MLE is the fundamental principle used to derive loss functions like Mean Squared Error.
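
The connection between MLE and Mean Squared Error can be sketched as follows, assuming the model y_i = f_θ(x_i) + ε_i with independent Gaussian noise ε_i ~ N(0, σ²) and fixed σ. The symbols f_θ, σ, and n (the number of data points) are notation introduced here.

```latex
\begin{aligned}
\log L(\theta)
  &= \sum_{i=1}^{n} \log \mathcal{N}\!\left(y_i \mid f_\theta(x_i), \sigma^2\right) \\
  &= -\frac{n}{2}\log\!\left(2\pi\sigma^2\right)
     - \frac{1}{2\sigma^2}\sum_{i=1}^{n}\left(y_i - f_\theta(x_i)\right)^2 \\
\arg\max_\theta \log L(\theta)
  &= \arg\min_\theta \frac{1}{n}\sum_{i=1}^{n}\left(y_i - f_\theta(x_i)\right)^2
\end{aligned}
```

The first term does not depend on θ, so maximizing the log-likelihood under this noise assumption is the same as minimizing the mean squared error.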

Lecture 8 — Information Theory: Entropy & Divergence

- Core Concepts: Entropy, Cross-Entropy, KL Divergence, Evidence Lower Bound (ELBO).

- Intuition: Measuring the information content of a distribution, or how much one distribution differs from another (KL divergence is asymmetric, so it is not a true distance).

- AI/CS Link: Cross-entropy is the standard loss for classification; KL divergence is used in VAEs and RLHF.
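
The three quantities are tied together by the identity H(p, q) = H(p) + KL(p ‖ q). The sketch below checks it numerically on two made-up distributions, using natural logarithms and assuming q is nonzero wherever p is nonzero.

```python
import math

def entropy(p):
    return -sum(pi * math.log(pi) for pi in p if pi > 0)

def cross_entropy(p, q):
    return -sum(pi * math.log(qi) for pi, qi in zip(p, q) if pi > 0)

def kl_divergence(p, q):
    return sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)

p = [0.7, 0.2, 0.1]   # hypothetical "true" distribution
q = [0.5, 0.3, 0.2]   # hypothetical model distribution

identity_gap = abs(cross_entropy(p, q) - (entropy(p) + kl_divergence(p, q)))
print(entropy(p), cross_entropy(p, q), kl_divergence(p, q), kl_divergence(q, p))
```

Swapping the arguments of `kl_divergence` gives a different value, which is why the order of the two distributions matters in losses built on KL divergence.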

Module 4: Linear Algebra for AI

Lecture 1 — Vectors, Norms & Dot Products

- Core Concepts: Vector spaces, L1/L2 Norms, dot products, and cosine similarity.

- Intuition: Representing data points as positions and directions in space.

- AI/CS Link: Embeddings (word/image vectors) rely on norms and similarity for retrieval and comparison.
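
Norms, dot products, and cosine similarity fit in a few lines of plain Python. The helper names and the example vectors are illustrative; a NumPy version would follow the same formulas.

```python
import math

def dot(u, v):
    return sum(a * b for a, b in zip(u, v))

def l1_norm(v):
    return sum(abs(a) for a in v)

def l2_norm(v):
    return math.sqrt(dot(v, v))

def cosine_similarity(u, v):
    """Cosine of the angle between u and v; assumes neither is the zero vector."""
    return dot(u, v) / (l2_norm(u) * l2_norm(v))

print(l1_norm([3, -4]), l2_norm([3, -4]))
print(cosine_similarity([1, 2, 3], [2, 4, 6]))   # same direction
print(cosine_similarity([1, 0], [0, 1]))         # orthogonal
```

Cosine similarity ignores vector length, so two embeddings pointing in the same direction score as identical even if their norms differ.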

Lecture 2 — Matrices & Linear Transformations

- Core Concepts: Matrix-vector multiplication, linear transformations, rank, and matrix inverse.

- Intuition: Using matrices to rotate, scale, or project data into new coordinate systems.

- AI/CS Link: Each neural network layer is a linear transformation followed by a non-linearity.

Lecture 3 — Systems of Linear Equations

- Core Concepts: Row Echelon Form (REF), Gaussian elimination, and solving Ax = b.

- Intuition: Finding the intersection of multiple hyperplanes.

- AI/CS Link: Used in Linear Regression and weight updates in certain optimization methods.

Lecture 4 — Subspaces, Orthogonality & Projections

- Core Concepts: Basis, subspaces, orthogonality, and projection of a vector onto a subspace.

- Intuition: Finding the “shadow” of a high-dimensional vector in a lower-dimensional space.

- AI/CS Link: Transformer projections and dimensionality reduction rely on these geometric principles.

Lecture 5 — Eigenvalues & Eigenvectors

- Core Concepts: Characteristic equation, Eigendecomposition, and the intuition of invariant directions.

- Intuition: Finding the natural axes of a transformation where data is only scaled, not rotated.

- AI/CS Link: Central to stability of Recurrent Neural Networks (RNNs) and spectral clustering.

Lecture 6 — Singular Value Decomposition (SVD)

- Core Concepts: SVD of a matrix, singular values, and the relationship between SVD and Eigendecomposition.

- Intuition: Decomposing any matrix into three operations: rotation, scaling, and rotation.

- AI/CS Link: Used for matrix compression and denoising; the same low-rank idea underlies Low-Rank Adaptation (LoRA) of LLMs.

Lecture 7 — Principal Component Analysis (PCA)

- Core Concepts: Low-rank approximation using SVD and derivation of PCA from covariance.

- Intuition: Identifying directions in a dataset that contain the most variance/information.

- AI/CS Link: A primary tool for data visualization and feature reduction in Data Science.

Lecture 8 — Tensors & Multilinear Algebra

- Core Concepts: High-dimensional arrays (tensors), tensor products, and attention weights geometry.

- Intuition: Generalizing vectors (1D) and matrices (2D) to arbitrary dimensions.

- AI/CS Link: All modern Deep Learning frameworks (PyTorch/TensorFlow) operate on tensors; attention mechanisms use tensor products.

🧠 OVERALL STRUCTURE

| Month | Focus |
|---|---|
| 1 | Mathematical Foundations (LA + Calculus compressed) |
| 2 | Probability & Density Estimation |
| 3 | Optimization & Core ML |
| 4 | Generative Models (VAE, Diffusion) |
| 5 | Deep Learning Systems & Transformers |
| 6 | Large Language Models (LLMs) & Deployment |

📘 MONTH 1 — Linear Algebra + Calculus for ML (Compressed & Focused)

Goal: Only what’s needed for ML.

Week 1 — Vectors, Geometry, Matrix Operations

Math:

- Vector spaces

- Norms

- Dot product

- Matrix multiplication

- Linear transformations

NumPy Lab:

- Implement matrix multiplication from scratch

- Build Nearest Neighbor Classifier

- Visualize 2D transformations

Torch Lab:

- GPU vs CPU tensor operations

- Batch operations & broadcasting

Mini Project:

- Implement KNN classifier fully in NumPy

Week 2 — Eigenvalues, SVD, PCA (Combined)

Math:

- Eigen decomposition

- SVD

- PCA derivation via SVD

- Low-rank approximation

NumPy Lab:

- Implement PCA manually

- Image compression via SVD

Torch Lab:

- PCA on high-dimensional dataset

- Compare reconstruction errors

Week 3 — Multivariable Calculus & Gradients

Math:

- Partial derivatives

- Gradient

- Jacobian intuition

- Chain rule

- Backprop idea

NumPy Lab:

- Compute gradients numerically

- Implement gradient descent

- Visualize 3D loss surface

Torch Lab:

- Autograd exploration

- Manual vs autograd gradient comparison

Week 4 — Optimization Theory

Math:

- Convexity

- SGD

- Momentum

- Adam

- Convergence behavior

NumPy Lab:

- Implement SGD, Momentum, Adam

- Compare convergence rates

Torch Lab:

- Custom optimizer implementation

- Learning rate scheduling

📊 MONTH 2 — Probability & Density Estimation (Core Spine)

This is the backbone of the course.

Week 5 — Probability Foundations

- Random variables

- PMF vs PDF

- Expectation

- Variance

- Covariance

- Law of Large Numbers

NumPy Lab:

- Monte Carlo simulation

- Estimate expectation via sampling

Torch Lab:

- torch.distributions exploration

Week 6 — Gaussian & Multivariate Distributions

- Multivariate Gaussian

- Covariance matrix geometry

- Likelihood function

NumPy Lab:

- Implement multivariate Gaussian PDF

- Visualize contour plots

- Compute log-likelihood

Torch Lab:

- Fit Gaussian to data via gradient descent

Week 7 — Maximum Likelihood Estimation

- MLE derivation

- Linear regression via MLE

- Logistic regression via MLE

NumPy Lab:

- Linear regression closed-form

- Logistic regression from scratch

Torch Lab:

- Build training loop

- Loss functions

- Model checkpointing

Week 8 — Latent Variables & EM

- Mixture models

- EM algorithm derivation

- GMM as density estimator

NumPy Lab:

- Implement EM from scratch

- Visualize clustering

Torch Lab:

- Log-sum-exp trick

- Stable implementation
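
The log-sum-exp trick from the lab list above can be sketched in plain Python. Subtracting the maximum before exponentiating keeps every exponent at or below zero, so nothing overflows. The input values are arbitrary.

```python
import math

def logsumexp(xs):
    """Compute log(sum(exp(x) for x in xs)) without overflow."""
    m = max(xs)
    return m + math.log(sum(math.exp(x - m) for x in xs))

log_probs = [1000.0, 1000.0]

try:
    naive = math.log(sum(math.exp(x) for x in log_probs))
except OverflowError:
    naive = None   # math.exp(1000.0) is too large for a float

stable = logsumexp(log_probs)
print(naive, stable)
```

In EM for mixture models this is the usual way to normalize per-component log-likelihoods into responsibilities.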

🤖 MONTH 3 — Neural Networks & Generalization

Week 9 — Neural Networks from Scratch

- Backprop derivation

- Activation functions

- Loss functions

NumPy Lab:

- 2-layer NN with manual backprop

Torch Lab:

- Same network in nn.Module

Week 10 — Regularization & Generalization

- Bias-variance

- L1, L2

- Dropout

- BatchNorm

Lab:

- Overfitting experiment

- Regularization comparison

Week 11 — CNNs

NumPy Lab:

- Implement convolution manually

Torch Lab:

- Build CNN for CIFAR/MNIST

- Train & evaluate

Week 12 — Density Estimation with Neural Networks

- Autoregressive models

- Normalizing flows (intro)

- Energy-based models (intuition)

Torch Lab:

- Simple autoregressive density model

- Log-likelihood training

🎨 MONTH 4 — Generative AI

Week 13 — Variational Autoencoders

- ELBO derivation

- KL divergence

- Reparameterization trick

NumPy Lab:

- Derive KL divergence manually

- Visualize latent space

Torch Lab:

- Full VAE implementation

- Sample new images

Week 14 — Diffusion Models

- Forward process

- Reverse process

- Noise prediction objective

Torch Lab:

- Train simple diffusion on MNIST

- Visualize denoising process

Week 15 — Conditional Generation

- Conditional VAE

- Conditional diffusion

- Classifier-free guidance

Lab:

- Conditional image generation

Week 16 — Evaluation of Generative Models

- FID score

- Likelihood vs perceptual quality

- Mode collapse

Lab:

- Compare VAE vs diffusion outputs

🧠 MONTH 5 — Transformers & Deep Learning Systems

Week 17 — Attention Mechanism

NumPy Lab:

- Implement scaled dot-product attention from scratch

Torch Lab:

- Multi-head attention module
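
Scaled dot-product attention computes softmax(QKᵀ / √d_k)·V. The sketch below uses plain Python lists instead of NumPy so it runs without dependencies; the tiny Q, K, and V matrices are made-up inputs.

```python
import math

def softmax(row):
    m = max(row)   # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in row]
    total = sum(exps)
    return [e / total for e in exps]

def attention(Q, K, V):
    """Return (output, weights) for lists of query, key, and value row vectors."""
    d_k = len(K[0])
    scores = [
        [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d_k) for k in K]
        for q in Q
    ]
    weights = [softmax(row) for row in scores]
    output = [
        [sum(w * v[j] for w, v in zip(w_row, V)) for j in range(len(V[0]))]
        for w_row in weights
    ]
    return output, weights

Q = [[1.0, 0.0], [0.0, 1.0]]   # hypothetical queries
K = [[1.0, 0.0], [0.0, 1.0]]   # hypothetical keys
V = [[1.0, 2.0], [3.0, 4.0]]   # hypothetical values
output, weights = attention(Q, K, V)
print(weights)
print(output)
```

Each output row is a weighted average of the value rows, with the largest weight on the key that best matches the query.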

Week 18 — Transformer Architecture

- Encoder

- Decoder

- Positional encoding

Torch Lab:

- Build mini transformer

- Train small language model

Week 19 — Training at Scale

- Mixed precision

- Gradient clipping

- Checkpointing

- Experiment tracking

Lab:

- Train transformer with logging

- Resume from checkpoint

Week 20 — Deployment

- TorchScript

- ONNX

- FastAPI

- Docker

- Model versioning

Lab:

- Deploy transformer as REST API

- Containerize with Docker

🚀 MONTH 6 — Large Language Models (LLM Engineering)

This month makes students industry-ready.

Week 21 — LLM Internals

- Causal language modeling objective

- Tokenization (BPE, SentencePiece)

- Scaling laws

- Pretraining vs finetuning

Lab:

- Train tiny GPT from scratch (small dataset)

- Implement tokenizer

Week 22 — Fine-Tuning LLMs

- LoRA

- QLoRA

- Instruction tuning

- Prompt engineering

Lab:

- Fine-tune open LLM (e.g., Llama or Mistral)

- Build task-specific chatbot

Week 23 — RAG Systems

- Embeddings

- Vector databases

- Retrieval pipeline

- Hybrid search

Lab:

- Build RAG system with:

  - Open-source LLM

  - FAISS

  - Document ingestion pipeline

Week 24 — LLM Deployment & Optimization

- Quantization

- vLLM

- Inference optimization

- API deployment

- Safety & alignment basics

Final Lab:

- Deploy production-ready LLM API

- Implement:

  - Rate limiting

  - Logging

  - Monitoring

🎓 CAPSTONE PROJECT OPTIONS

Students must deliver:

- Research report (math + theory)

- GitHub repo (clean structure)

- Dockerized deployment

- Technical presentation

Possible Tracks:

- Custom diffusion model

- End-to-end RAG system

- Fine-tuned domain LLM

- Density estimation research project

- Vision-language model

🧪 LAB STRUCTURE TEMPLATE (Standardized)

Every lab includes:

- Mathematical derivation section

- NumPy implementation

- Torch production implementation

- Benchmarking

- Debugging exercises

- Reflection questions

📊 Evaluation Scheme

| Component | Weight |
|---|---|
| Weekly Labs | 30% |
| Midterm (Math + Coding) | 20% |
| Mini Project (Month 3) | 15% |
| Generative Model Project | 15% |
| Final Capstone | 20% |

🛠 Tool Stack

- Python

- NumPy

- PyTorch

- HuggingFace

- FAISS

- FastAPI

- Docker

- Weights & Biases

- Git

🎯 Final Graduate Profile

After 6 months, students can:

- Derive MLE & ELBO

- Implement backprop manually

- Train diffusion models

- Understand transformer math

- Fine-tune LLMs

- Build RAG systems

- Deploy AI systems

- Debug training instability

- Work as an ML / GenAI Engineer

📘 Course Outline

📅 Month 1: Programming Fundamentals

Week 1: Loops, Functions & Basics

- Lecture 1: Environment Setup, Data Types, Conditionals, Loops

- Lecture 2: Functions, Recursion, Time Complexity

- Lab: Shapes Printer, Console Animation

Week 2: Arrays & Sorting

- Lecture 1: Arrays, Strings, 2D Arrays, Searching

- Lecture 2: Sorting Algorithms, Merge & Quick Sort

- Lab: Voting System, Snakes & Ladders

Week 3: Pointers & Memory

- Lecture 1: Pointers, Stack vs Heap, Dynamic Memory

- Lecture 2: Function Pointers, Const & Smart Pointers

- Lab: Gomoku Game

Week 4: Structs & Chess Project

- Lecture 1: Structs, Enums, Data Modeling

- Lecture 2: File Handling, Function Pointer Dispatch

- Lab: Chess Game (Procedural)

📅 Month 2: OOP & Design Patterns

Week 5: Classes & OOP Basics

- Class Structure, Constructors, Destructors

- Lab: Polynomial Calculator

Week 6: Operator Overloading

- Arithmetic & Advanced Overloading

- Lab: Huge Integer Project

Week 7: Relationships & STL

- Object Relationships, Design Patterns

- Lab: LUDO Game

Week 8: Inheritance & Polymorphism

- OOP Concepts, Behavioural Patterns

- Lab: Chess (OOP Version)

📅 Month 3: Data Structures & STL

Week 9: Stack, Queue, Vector

- Implementation and STL mapping of basic data structures

Week 10: Linked Lists

- Singly, Doubly, and Circular Linked Lists with applications

Week 11: Trees

- Binary Trees, BST, AVL, Heaps, and Tries

Week 12: Hashing

- Hash tables, collision handling, real-world use cases

📅 Month 4: Algorithms

Week 13: Divide & Conquer

- Recurrence relations and divide & conquer strategies

Week 14: Dynamic Programming

- Optimization using DP (1D, 2D, advanced patterns)

Week 15: Greedy Algorithms

- Greedy strategies and optimization problems

Week 16: Graph Algorithms

- BFS, DFS, shortest paths, graph problems

📅 Month 5: Operating Systems, Databases & System Design

Week 17: Operating Systems

- Processes, scheduling, threads, synchronization

Week 18: Memory & File Systems

- Memory management and file handling systems

Week 19: Databases (SQL)

- SQL, indexing, transactions, database design

Week 20: System Design

- Scalable systems, architecture, design patterns

📅 Month 6: Mock Interviews

Week 21: DSA Mock Interviews

- Practice real interview-style coding problems

Week 22: System Design Interviews

- Mock interviews focused on system design

Week 23: Behavioural Preparation

- Communication skills, HR questions, STAR method

Week 24: Final Mock & Launch

- Full interview simulation and final preparation